
Google Glass

Snapchat has launched Spectacles, the company’s first gadget – sunglasses with a built-in camera.

Spectacles will go on sale later this year priced at $130.

The glasses will record up to 30 seconds of video at a time.

As part of the announcement, Snapchat is renaming itself to Snap, Inc.

Image source: Snapchat

The renaming underlines the company’s apparent ambition to expand beyond its ephemeral messaging app, which is highly popular with young people.

An article published by the Wall Street Journal on September 23 showed Snap’s 26-year-old creator Evan Spiegel in a series of pictures taken by legendary fashion photographer Karl Lagerfeld.

In an interview, Evan Spiegel explained his rationale for creating Spectacles.

“It was our first vacation, and we went to [California’s] Big Sur for a day or two. We were walking through the woods, stepping over logs, looking up at the beautiful trees.

“And when I got the footage back and watched it, I could see my own memory, through my own eyes – it was unbelievable.

“It’s one thing to see images of an experience you had, but it’s another thing to have an experience of the experience. It was the closest I’d ever come to feeling like I was there again.”

On September 24, Snap released some limited information about how the glasses will work.

Footage will be recorded in a new, circular format which can be viewed in any orientation, the company said. The battery on the device will last around a day.

A light on the front of the device will indicate to people nearby when the glasses are recording.

Before Snap confirmed the product, Business Insider published a promotional video for it that it had found on YouTube. The video has since been taken down.

Spectacles will remind many of Google Glass, an ill-fated attempt by the search giant to create smart glasses.

While Google Glass did get into the hands of developers around the world – at a cost of $1,500 each – the device never came close to being a consumer product. Google eventually halted development, but insisted the idea was not dead.

According to the Wall Street Journal, Snap is not treating Spectacles as a major hardware launch but rather as a fun toy that will have limited distribution.

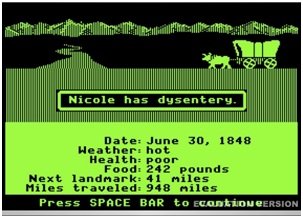

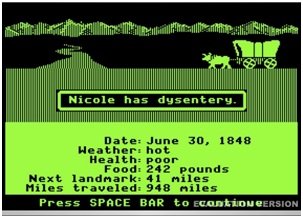

Oregon Trail Screenshot by The Pug Father from Flickr Creative Commons.

Digital gaming has come a long way since the days of Oregon Trail. Thanks to cloud computing and mobile devices, games pack in a lot more action and a lot less dysentery.

So what’s next for mobile cloud gaming? The answer: exciting things. Professors from the University of British Columbia and China’s Huazhong University of Science and Technology have teamed up to set out their predictions in “Mobile Cloud Gaming: The Next Generation.”

1. Dynamic Cloud Integration

One of the biggest drawbacks of mobile gaming is the bandwidth required to deliver complex visuals. It’s not such a big deal when you’re using a Wi-Fi connection, but over a cellular connection, data gets expensive. In a mobile browser game, heavy resource usage also eats up battery life. Dynamic cloud integration finds a happy medium between bandwidth and battery by optimizing where game components run.

When it’s most efficient for game components to run locally, the cloud server onloads certain functions to the device. Dynamic cloud integration will also give the mobile client the flexibility to offload functions back to the cloud. Meanwhile, both the cloud server and the device constantly monitor usage to keep resource consumption optimal. Players get better performance and longer periods of play.
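The placement decision described above (run each game component on the device or in the cloud, whichever is cheaper right now) can be illustrated with a minimal Python sketch. It is not the researchers’ method: the `Component` fields, the battery weight and the per-kilobyte data prices are hypothetical placeholders, chosen only to show how moving from Wi-Fi to a cellular connection can shift work back onto the device.

```python
from dataclasses import dataclass


@dataclass
class Component:
    """A hypothetical game component with rough, made-up cost estimates."""
    name: str
    local_energy_mj: float  # estimated battery drain if run on the device
    transfer_kb: float      # estimated data exchanged if run in the cloud


def choose_placement(components, on_wifi,
                     battery_weight=1.0,
                     cellular_cost_per_kb=0.5,
                     wifi_cost_per_kb=0.05):
    """Return {component name: "device" or "cloud"}.

    Each component goes wherever its weighted cost is lower: battery
    drain for local execution, data transfer for cloud execution.
    All weights and prices are illustrative assumptions.
    """
    per_kb = wifi_cost_per_kb if on_wifi else cellular_cost_per_kb
    placement = {}
    for c in components:
        local_cost = c.local_energy_mj * battery_weight
        cloud_cost = c.transfer_kb * per_kb
        placement[c.name] = "device" if local_cost <= cloud_cost else "cloud"
    return placement


parts = [Component("physics", 20, 100), Component("renderer", 300, 400)]
print(choose_placement(parts, on_wifi=True))   # cheap data: both offloaded
print(choose_placement(parts, on_wifi=False))  # costly data: physics stays local
```

Calling `choose_placement` again whenever the network or battery state changes (for example, raising `battery_weight` as the battery runs low) is a crude stand-in for the constant monitoring the article describes; a real system would also have to weigh latency and server load.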

2. Cross-Platform Gaming

Cloud computing already makes it possible to save a game and resume it on a different device. Cross-platform gaming will improve to the point that switching from a desktop to a mobile device becomes seamless.

In the future, every mobile game, as part of its standard feature set, will optimize saved games for different screen resolutions and device inputs. Attackers will also learn to tailor malware to move just as seamlessly through the gaming environment, making Android security more important than ever.

3. Context-Aware Gaming

With context-aware gaming, your mobile device uses its GPS, camera, accelerometer, and other sensors to adapt an existing game to your surroundings. Imagine sitting in the passenger seat and playing a simple game like Traffic Racer, in which you’re racing through terrain that resembles the landscape outside your window, or playing a game that changes based on multi-player inputs and the surroundings of different players.

Based on the time of day, your location, and your movements, your games will automatically deliver novel elements. A wave of your hand will release a magic spell. Game elements will respond to physical manifestations of your state of mind, like elevated heart rate and rapid breathing. One classic example of context-aware gaming is the 2003 release Mogi, which let players go on scavenger hunts for virtual objects within their existing physical environments.

4. Augmented Reality

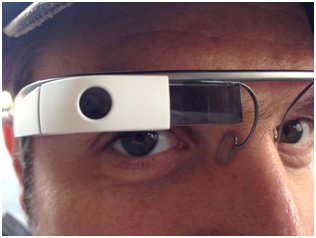

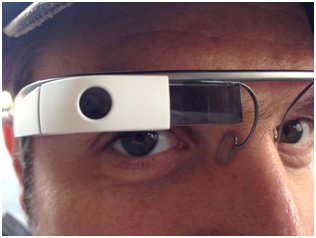

Google Glass image by Michael Praetorius

Imagine donning your Google Glass and playing a hands-free game while seeing a live direct or indirect view of the real world. If you’re coordinated enough, you’ll be able to play your game while walking to work, without walking into a lamppost.

Now, imagine walking to work and staring not at the people around you but at a gamified version of reality. For example, the game Human Pacman superimposes dots, power pellets, and scrumptious floating fruit within your normal field of vision. Your movements, including movements of your head, determine where Pacman goes next.

Augmented reality gaming, which incorporates many context-aware elements, will turn the world as you see it into a video feed. In a virtual sense, you’ll eventually be able to punch the person who cut in front of you at Starbucks (accompanied by your own theme music and a balloon that says “KAPOW!”) without moving anything but your eye muscles.

5. Multi-Player Cloud Gaming

Desktop computers and consoles offer plenty of multi-player gaming opportunities. On mobile, games like Minecraft Pocket Edition already let players on the same local network join each other’s worlds. As cloud gaming technology and streaming become more sophisticated, you’ll play mobile multi-player scenarios not only with the person sitting next to you but also with people all over the world.

All Hail the Future

Thanks to the cloud, mobile gaming requires only a thin client, has access to virtually unlimited resources, and allows for seamless play across many devices. Next-gen cloud gaming advances will reduce network dependence, lower bandwidth consumption, and work around the limited resources of mobile browsers.

As cloud and mobile device advancements make gaming more immersive and less screen-dependent, the whole world becomes a stage for a game. Let’s just hope that when you catch dysentery in Future Mobile Oregon Trail, it’s not a context-aware development.


Woman using computer and mobile devices image by Antonio Guillem from Shutterstock.

Google Glass image by Michael Praetorius from Flickr Creative Commons.

Google has revealed for the first time what users will see through its “Project Glass” wearable computer.

1. Google Glass responds to voice commands

Google Glass will perform many of the same tasks as smartphones, except the spectacles respond to voice commands instead of fingers touching a display screen.

2. Google Glass runs on Google’s Android

The glasses include a tiny display screen attached to a rim above the right eye and run on Google’s Android operating system for mobile devices.

3. Take pictures or record video on the move

Because no hands are required to operate them, Google Glass will make it easier for people to take pictures or record video wherever they might be or whatever they might be doing.

4. Online searches can be easily conducted

Online searches can also be conducted more easily by just telling Google Glass to look up a specific piece of information. Google’s Android system already has a voice search function on smartphones and tablet computers.

5. Google Glass can sync to the Internet

The eyewear features a built-in camera, microphone and speaker, and can sync to the Internet over wireless connections.

6. Video through the eyes of wearers can be streamed live

As with the skydivers, cyclists, and wall-walkers who took part in the stunt at Google’s developer conference, video through the eyes of wearers can be streamed live on Google’s social network, Google+.

7. Google Glass will come in five colors

Google said all of the footage in the stunt was captured through Project Glass, which will come in five colors (black, gray, blue, red or white) and have removable shades.

8. The mass-market Google Glass will cost less than $1,500

Google co-founder Sergey Brin said the mass-market version of Google Glass will cost less than $1,500, but more than a smartphone.

9. Google first began developing glasses in 2010

Sergey Brin has been overseeing the work on Google Glass, which the company first began developing in 2010 as part of a secretive company division now known as Google X.

10. Google Glass to hit market in 2014

Google Glass is at the forefront of a new wave of technology known as “wearable computing” and should hit the market in a little more than a year. The company hopes it will someday make fumbling with smartphones obsolete.

[youtube V6Tsrg_EQMw]

Developers working on apps for Google Glass have been informed they will not be allowed to place ads within the device’s display.

The newly published terms and conditions for developers working on Google Glass also prohibit companies from charging for apps.

The smart glasses, which have a five-megapixel camera and voice-activated controls, have started shipping.

The first devices will go to developers and “Glass Explorers”.

Google held a competition earlier this year inviting potential users to come up with ways to use the device, while developers have been eager to be among the first to try out the technology.


As part of the announcement, Google also gave the first official details of the device’s specifications.

Among them, a bone conduction transducer allows the wearer to hear audio without the need for in-ear headphones: sound waves are instead delivered through the user’s cheekbones and into the inner ear.

Google promises a battery lasting for “one full day of typical use”.

The Google Glass display is the equivalent, the company says, of looking at a 25in (63cm) high-definition screen from eight feet (2.4m) away. The device can record video at a resolution of 720p.

It has 16GB of on-board storage, and connects to other mobile devices via Bluetooth and Wi-Fi.
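To put Google’s comparison in perspective, the apparent size of that virtual screen can be estimated with basic trigonometry. This is only a back-of-the-envelope check, assuming the 25in figure is the screen diagonal (the usual convention for screen sizes) and taking eight feet as 96 inches:

$$\theta = 2\arctan\left(\frac{25/2}{96}\right) = 2\arctan(0.1302) \approx 2 \times 7.42^\circ \approx 14.8^\circ$$

In other words, under that assumption the display would span roughly 15 degrees of the wearer’s field of view, measured diagonally.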

To date, privacy groups have offered the strongest opposition to Google’s plans for Glass.

One campaigner from a group called Stop The Cyborgs wrote: “We want people to actively set social and physical bounds around the use of technologies and not just fatalistically accept the direction technology is heading in.”

He predicted that the focus of coverage about the device would shift from talking about the “amazing new gadget that will improve the world” to “the most controversial device in history”.

For developers, that controversy could begin with wondering how exactly they will be able to make money from the device.

Also keeping an eye on the excitement generated by Google will be Japanese firm Telepathy Inc.

Its device, the Telepathy One, has been touted as a possible competitor to Google Glass.

Chinese search giant Baidu has also confirmed it is working on a Glass-like project – but details are so far scant.

[youtube DSgF0rpGI9E]

A group called “Stop The Cyborgs” warns that Google Glass and other augmented reality gadgets risk creating a world in which privacy is impossible.

Stop The Cyborgs wants limits put on when headsets can be used.

It has produced posters so that businesses can warn wearers that the glasses are banned or that recording is not permitted.

The campaign comes as politicians, lawyers and bloggers debate how the gadgets will change civil society.


Based in London, the Stop The Cyborgs campaign began at the end of February and the group did not expect much to happen before the launch of Google Glass in 2014.

However, the campaign’s launch coincided with a push on Twitter by Google to get people thinking about what they would do if they had a pair of the augmented reality spectacles. The camera-equipped headset suspends a small screen in front of the wearer’s eye and pipes information to that display. The camera and other functions are voice controlled.

Google’s push, coupled with the 5 Point Cafe in Seattle announcing that it would pre-emptively ban users of the gadget, has generated a lot of debate and given the campaign a boost.

Posters produced by the campaign that warn people not to use Google Glass or other personal surveillance devices have been downloaded thousands of times.

In addition, the campaign says coverage of the Glass project in mainstream media and on the web has swiftly turned from “amazing new gadget that will improve the world” to “the most controversial device in history”.

The limits that the Stop The Cyborgs campaign wants placed on Google Glass and similar devices would include a clear way to let people know when they are being recorded.

In a statement, Google said: “We are putting a lot of thought into how we design Glass because new technology always raises important new issues for society.”

“Our Glass Explorer program will give all of us the chance to be active participants in shaping the future of this technology, including its features and social norms,” it said.

Some US states are already looking to impose other limits on augmented reality devices. West Virginia is reportedly preparing a law that would make it illegal to use such devices while driving. Those breaking the law would face heavy fines.

New details about Google’s eagerly-anticipated smart glasses have been released by the company in a YouTube video.

The “How It Feels” video the company uploaded to YouTube shows Google Glass in action, including the interface that appears in the wearer’s line of sight.

The search giant has also opened up the trial of the product to “creative individuals” and developers.

Google co-founder Sergey Brin was recently spotted on New York’s subway testing the device.

The product was first unveiled as part of a demonstration at a Google launch event last year where developers were offered early access to the device for $1,500.

The company is now inviting people in the US to use the hashtag #ifihadglass to suggest ways they would make use of the headset.

“We’re looking for bold, creative individuals who want to join us and be a part of shaping the future of Glass,” Google said.

“We’re still in the early stages and, while we can’t promise everything will be perfect, we can promise it will be exciting.”


The demo video showed how Glass can be used to take pictures and record video, as well as share content directly via email or social networks.

Voice commands such as “OK, Glass, take a picture” were used to control the device.

Other features appeared to include Skype-like video chats and contextual information such as weather reports and map directions.

All of this information appeared in a small, translucent square in the top right of the wearer’s field of vision.

The display is considerably less intrusive than the one shown in previously published concept videos.

Wearable technology is seen as a major growth area for hardware makers in 2013 and beyond.

In 2008, Apple patented a laser-based “head mounted display system” that it suggested could stream video from its iPod, among other features.

Patents obtained by Sony and Microsoft cover the creation of miniature displays that sit over users’ eyes.

Oakley recently launched Airwave, ski goggles with built-in sensors that show the wearer’s speed, the size of their jumps and the music they are listening to on an in-built screen.

Away from the head, the newly released Pebble watch links directly to a smartphone – a concept Apple is also rumored to be working on.

[youtube v1uyQZNg2vE]

[youtube Ir_83_3hAuc]